FPGAs move well beyond their original architecture and offer a design model for future ASICs

Today's FPGA is no longer just a collection of look-up tables (LUTs) and registers. It has moved well beyond its original architecture and now offers a design model for future ASICs.

The devices now range from basic programmable logic to complex SoCs. In a variety of applications, including automotive, AI, enterprise networking, aerospace, defense, and industrial automation, FPGAs enable chipmakers to implement systems in ways that can be updated as needed. This flexibility is critical in new markets where protocols, standards and best practices are still evolving, and where engineering change orders (ECOs) are needed to remain competitive.

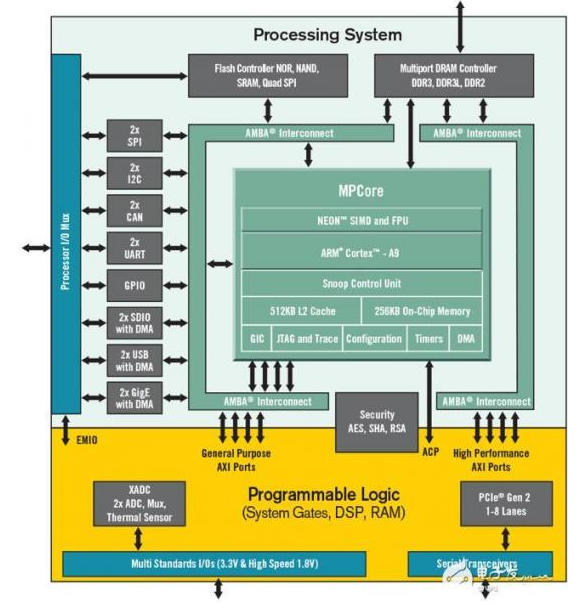

Aldec marketing director Louie de Luna said that this is why Xilinx decided to add an Arm core to its Zynq FPGA to create an FPGA SoC. "The most important thing is that the vendor has improved the tool flow. This has generated a lot of interest in Zynq. Their SDSoC development environment looks like C, which is good for developers because applications are usually written in C. So they take the software functions and allow users to assign those functions to hardware."

Xilinx’s Zynq-7000 SoC. Source: Xilinx

Some of these FPGAs are more than just SoC-like. They are SoCs themselves.

"They may include multiple embedded processors, dedicated computing engines, complex interfaces, mass storage, and more," said Muhammad Khan, integrated verification product specialist at OneSpin Solutions. "System architects plan and use the resources available on FPGAs, just as they do for ASICs. Design teams use synthesis tools to map their SystemVerilog, VHDL or SystemC RTL code into the underlying logic elements. For most of the design process, the difference between effectively targeting FPGAs and targeting ASICs or full-custom chips is shrinking."

ArterisIP chief technology officer Ty Garibay is very familiar with this evolution. "Historically, Xilinx started down the Zynq road in 2010, and they defined a product that incorporates an Arm SoC hard macro into an existing FPGA," he said. "Then Intel (Altera) hired me to do the same thing. The value proposition is that the SoC subsystem is what many customers want, but because of the characteristics of SoCs, especially the processors, they are not well suited to synthesis on FPGAs. Embedding that level of functionality in the actual programmable logic is prohibitive because it uses nearly the entire FPGA for that one function, but as a hard function it can occupy just a small part of the overall FPGA chip. You give up the ability to provide truly reconfigurable logic for the SoC, but it can be programmed as software, and its functionality can be changed that way."

This means the architecture contains software-programmable functions, hard macros and hardware-programmable functions that can all work together, he said. "There are some pretty good markets, especially in low-cost automotive control, where a medium-performance microcontroller-type device was traditionally placed next to the FPGA anyway. Customers would just say, 'I'll put that entire function into a hard macro on the FPGA chip to reduce board space, reduce BOM, and reduce power consumption.'"

This is in line with the development of FPGAs over the past 30 years. The original FPGAs were just programmable fabric and a bunch of I/O. Over time, memory controllers were hardened, along with SerDes, RAM, DSP and HBM controllers.

"FPGA vendors have continued to increase chip area, but they have also continued to add more and more hard logic that is widely used by a large percentage of the customer base," Garibay said. "What is happening today is extending that to the software-programmable side. Most of what was added before the Arm SoC was different forms of hardware, mainly related to I/O, but also including DSP. It makes sense to save programmable logic gates by hardening these functions because there is enough planned usage."

A point of view

This has basically turned the FPGA into a Swiss Army knife.

"If you go back in time, it was just a bunch of LUTs and registers, not gates," said Anush Mohandass, vice president of marketing and business development at NetSpeed Systems. "They have a classic problem. If you compare any general-purpose task to its application-specific version, general-purpose computing provides more flexibility, while application-specific computing provides some performance or efficiency advantage. Xilinx and Intel (Altera) are trying to align more and more with that. They noticed that almost every FPGA customer has DSP and some form of compute. So they added Arm cores, they added DSP cores, they added all the different PHYs and commonly used things. They hardened these, which makes them more efficient and improves the performance curve."

These new features open the door for FPGAs to play an important role in a variety of emerging and existing markets.

"From a market perspective, you can see that FPGAs are definitely moving into the SoC market," said Piyush Sancheti, senior marketing director at Synopsys. "Whether you do an FPGA or a full-blown ASIC is a matter of economics. These lines are beginning to blur, and we certainly see more and more companies, especially in certain markets, going into production with FPGAs where that is more economical."

Historically, FPGAs have been used for prototyping, but production use has been limited to markets such as aerospace, defense, and communications infrastructure, Sancheti said. "The market is now expanding into automotive, industrial automation and medical equipment."

AI, a thriving FPGA market

Some companies that use FPGAs are system vendors/OEMs who want to optimize the performance of their IP or AI/ML algorithms.

"They want to develop their own chips, and for many of them, starting to do ASICs may be a little scary," said NetSpeed's Mohandass. "They may not want to spend $30 million on wafer costs to get the chips. For them, FPGAs are an effective entry point. They have unique algorithms and their own neural networks, and they can see whether the design provides the performance they expect."

Stuart Clubb, senior product marketing manager for Catapult HLS Synthesis and Verification at Mentor, a Siemens Business, said the current challenge for AI applications is quantification. "What kind of network do you need? How do I build this network? What is the memory architecture? Starting with the network, even if you have only a few layers, and you have a lot of data with many coefficients, it quickly turns into millions of coefficients, and the memory bandwidth becomes frightening. No one really knows what the right architecture is. If the answer is not known, you won't jump in and build an ASIC."
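Clubb's point about coefficient counts can be made concrete with a rough calculation. The sketch below is illustrative only: the layer shapes, the 16-bit weight size, the 30 frames-per-second rate and the assumption that every coefficient is re-read from external memory once per frame are all hypothetical, not figures from the article.

```python
# Hypothetical convolution layers: (kernel_size, in_channels, out_channels).
# These shapes are illustrative assumptions, not taken from any real network.
LAYERS = [(3, 3, 64), (3, 64, 128), (3, 128, 256), (3, 256, 512)]


def conv_coefficients(kernel, in_ch, out_ch):
    """Weights plus one bias per output channel for a square 2D convolution."""
    return kernel * kernel * in_ch * out_ch + out_ch


def total_coefficients(layers):
    """Sum the coefficient counts of all layers."""
    return sum(conv_coefficients(*layer) for layer in layers)


def weight_bandwidth_bytes_per_s(layers, bytes_per_weight, frames_per_s):
    """Bandwidth if every coefficient is fetched once per frame (an assumption)."""
    return total_coefficients(layers) * bytes_per_weight * frames_per_s


if __name__ == "__main__":
    print("coefficients:", total_coefficients(LAYERS))
    print("bytes per second:", weight_bandwidth_bytes_per_s(LAYERS, 2, 30))
```

With these made-up shapes, four layers already hold about 1.55 million coefficients, and the estimate ignores activation traffic entirely.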

In the enterprise networking space, the most common problem is that cryptography standards seem to keep changing. "Rather than trying to build an ASIC, it's better to put it in the FPGA and make the crypto engine better," Mohandass said. "Or, if you do any type of packet processing for global networking, an FPGA still gives you more flexibility and more programmability. That's where flexibility comes in, and they've used it. You can still call it heterogeneous computing, and it still looks like an SoC."

New rules

With next-generation FPGA SoCs, the old rules no longer apply. "Specifically, if you debug on the board, you're doing it wrong," Clubb pointed out. "Although debugging on the development board is considered a lower-cost solution, it goes back to the early days of being able to say, 'It is programmable, you can put an oscilloscope on it, you can look at it and see what happened.' But now to say, 'If I find a bug, I can fix it, write a new bitstream in a day, then put it back on the board and find the next bug,' is crazy. This is a mentality you see a lot in areas where employees' time is not seen as a cost. Management will not buy a simulator or system-level tools or a debugger because 'I am just paying this person to get the job done, and I will scream at him until he works harder.'"

He said this behavior is still very common, because there are enough companies that keep everyone on their toes by cutting staff 10% every year.

However, FPGA SoCs are true SoCs that require rigorous design and verification methods. "The fact that the fabric is programmable doesn't really affect design and verification," Clubb said. "If you make an SoC, yes, you can follow the 'Lego' engineering approach that I have heard about from some customers. This is the block diagram approach. I need a processor, a memory, a GPU, some other parts, a DMA memory controller, WiFi, USB and PCI. These are the 'Lego' blocks you assemble. The trouble is that you have to verify that they work and that they work together."

Despite this, FPGA SoC developers are rapidly catching up with their SoC counterparts where verification methodology is concerned.

"They are not as advanced as traditional silicon SoC developers, whose thinking is 'this will cost me $2 million, so I'd better get it right,' because the cost of [using FPGAs] is lower," Clubb said. "But if you spend $2 million developing an FPGA and you get it wrong, now you will spend three months fixing these bugs, and there are still problems to solve. How big is the team? How much does it cost? What are the penalties? These are costs that are very difficult to quantify. If you are in the consumer space, where you really care about shipping for Christmas, using an FPGA is almost impossible, so this has a different priority. Taking on the overall cost and risk of completing an SoC in custom silicon, pulling the trigger and saying, 'This is my system, I am done,' is something you see much less of. As we all know, the industry is consolidating, and there are fewer and fewer big players doing big chips. Everyone else must find a way to get there, and these FPGAs are making that possible."

New tradeoffs

Sancheti said it is not uncommon for engineering teams to design with the intention of keeping their options open on the target device. "We have seen many companies create and verify RTL without knowing whether they will go with an FPGA or an ASIC, because many times this decision may change. You can start with an FPGA. If you reach a certain volume, the economics may favor moving to an ASIC."
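The FPGA-versus-ASIC economics described above reduce to a simple break-even calculation: an ASIC typically carries a higher up-front (NRE) cost but a lower unit cost. The sketch below is a minimal illustration under that assumption. The function name and every dollar figure are hypothetical and are not taken from the article or from any vendor.

```python
import math


def break_even_volume(fpga_nre, fpga_unit, asic_nre, asic_unit):
    """Smallest unit volume at which the ASIC's total cost is no higher
    than the FPGA's, using total = NRE + unit_cost * volume for each option."""
    if fpga_unit <= asic_unit:
        raise ValueError("the ASIC only pays off if its unit cost is lower")
    extra_nre = asic_nre - fpga_nre
    saving_per_unit = fpga_unit - asic_unit
    return max(0, math.ceil(extra_nre / saving_per_unit))


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to make the arithmetic easy to follow.
    volume = break_even_volume(
        fpga_nre=200_000, fpga_unit=150,
        asic_nre=5_000_000, asic_unit=30,
    )
    print("ASIC breaks even at", volume, "units")
```

Below the break-even volume the FPGA is the cheaper choice, which is one reason a team might verify RTL once and defer the target decision until volumes are clearer.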

This is especially true for today's AI application space.

"The technology for accelerating AI algorithms is evolving," said Mike Gianfagna, vice president of marketing at eSilicon. "Obviously, artificial intelligence algorithms have been around for a long time, but now we have suddenly become much more sophisticated in how we use them, and the ability to run them at near real-time speeds is amazing. It started with the CPU and then moved to the GPU. But even the GPU is a programmable device, so it has a certain generality. The architecture is good at parallel processing because that is what graphics acceleration is all about, and that is convenient because it is also what AI is all about. It is good to a large extent, but it is still a general-purpose approach. So you get a certain level of performance and power consumption. Some people turn to the FPGA next, because you can target the circuits better than with a GPU, and both performance and power efficiency improve. ASICs are the ultimate in power and performance because you have a fully custom architecture that fits your needs exactly. This is obviously the best."

Artificial intelligence algorithms are difficult to map to chips because they are in an almost constant state of change. So doing a fully custom ASIC at this point is not an option, because it would be obsolete by the time the chip shipped. "FPGAs are very good at this because you can reprogram them, so even if they cost a lot of money, they won't become outdated and your money won't be lost," Gianfagna said.

There are some custom memory configurations, as well as certain subsystem functions such as convolution and transpose memories, that are used again and again. So although the algorithm may change, some blocks do not change and/or are reused repeatedly. With this in mind, eSilicon is developing software analysis capabilities to examine AI algorithms. The goal is to be able to choose the best architecture for a particular application more quickly.

"An FPGA gives you the flexibility to change your machine or engine, because you may encounter a new type of network. Committing to an ASIC is very risky in that sense, because you may not have the best support for it, so [with an FPGA] you have that flexibility," said Deepak Sabharwal, vice president of intellectual property engineering at eSilicon. "However, FPGAs are always limited in terms of capacity and performance, so FPGAs can't really reach production-level specifications, and you'll end up having to go to ASICs."

Embedded LUT

Another option that has made progress over the past few years is the embedded FPGA, which integrates programmability into the ASIC while adding the performance and power advantages of the ASIC to the FPGA.

"The FPGA SoC is still mainly an FPGA, with a relatively small chip area devoted to processing," said Geoff Tate, CEO of Flex Logix. "In the block diagrams, the proportions look different, but in the actual die photos it is mostly FPGA. But there is a class of applications and customers for which the right ratio between FPGA logic and the rest of the SoC is to have a much smaller FPGA, giving them RTL programmability in a more cost-effective chip size."

This approach is gaining traction in areas such as aerospace, wireless base stations, telecommunications, networking, automotive and vision processing, and especially artificial intelligence. "The algorithms are changing so fast that the chips are almost out of date when they come back," Tate said. "Having some embedded FPGA allows them to iterate on their algorithms faster."

In this case, Nijssen says that programmability is critical to avoid re-creating the entire chip or module.

Debug design

As with all SoCs, understanding how to debug these systems and building in instrumentation can help you spot problems early.

"As system FPGAs become more like SoCs, they need the development and debugging methodologies expected of SoCs," said UltraSoC CEO Rupert Baines. "There is a (maybe naive) view that because you can see anything in an FPGA, it's easy to debug. That is true at the bit level with a waveform viewer, but not at the system level. The latest large FPGAs are clearly system level. At that point, the waveform-level view you get from a bit-probe type arrangement is not very useful. You need a logic analyzer, a protocol analyzer, and good debug and trace capabilities for the processor cores themselves."

The size and complexity of these FPGAs require a verification process similar to that of an ASIC. Advanced UVM-based testbenches are supported by simulation, and often by emulation as well. Formal tools play a key role here, from automated design checks to assertion-based verification. While it's true that FPGAs can be changed faster and more cheaply than ASICs, the difficulty of detecting and diagnosing errors in large SoCs means thorough verification is needed before entering the lab, OneSpin's Khan said.

In fact, in one area the verification requirements for FPGA SoCs may be more demanding than for ASICs: equivalence checking between the RTL input and the synthesized netlist. Compared with traditional ASIC logic synthesis flows, the refinement, synthesis, and optimization phases for FPGAs often make more modifications to the design. These changes may include moving logic across cycle boundaries and implementing registers in memory structures. Khan adds that thorough sequential equivalence checking is critical to ensure that the final FPGA design still matches the original designer intent in the RTL.
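To illustrate what moving logic across a cycle boundary means for equivalence, the sketch below models a tiny circuit twice: once with an adder followed by an output register, and once "retimed" so the registers sit in front of the adder. It then compares their outputs over every input sequence up to a fixed depth. This is a bounded, simulation-style check written for illustration, not the formal sequential equivalence checking that commercial tools perform. The 2-bit width, the zero reset values and all function names are assumptions.

```python
from itertools import product

WIDTH = 2                      # small width so exhaustive checking stays cheap
MASK = (1 << WIDTH) - 1


def original(inputs):
    """Adder followed by one output register (reset value 0)."""
    y_reg, outs = 0, []
    for a, b in inputs:
        outs.append(y_reg)             # value visible during this cycle
        y_reg = (a + b) & MASK         # register captures the sum
    return outs


def retimed(inputs):
    """Same function with the registers moved in front of the adder."""
    ra, rb, outs = 0, 0, []
    for a, b in inputs:
        outs.append((ra + rb) & MASK)  # adder now sits after the registers
        ra, rb = a, b
    return outs


def retimed_bad_reset(inputs):
    """Deliberately broken variant: one register resets to 1 instead of 0."""
    ra, rb, outs = 0, 1, []
    for a, b in inputs:
        outs.append((ra + rb) & MASK)
        ra, rb = a, b
    return outs


def bounded_equivalent(f, g, depth):
    """Compare f and g on every input sequence of length `depth`.
    Returns (True, None) or (False, first_mismatching_sequence)."""
    values = range(MASK + 1)
    per_cycle = list(product(values, values))
    for seq in product(per_cycle, repeat=depth):
        if f(seq) != g(seq):
            return False, seq
    return True, None


if __name__ == "__main__":
    print(bounded_equivalent(original, retimed, 3))
    print(bounded_equivalent(original, retimed_bad_reset, 3)[0])
```

The two correct models differ in their internal state yet agree on every output, which is why a purely combinational, register-by-register comparison is not enough once synthesis has moved registers.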

In terms of tools, there is room to optimize performance. "With embedded vision applications, many of which are written for Zynq, you might get 5 frames per second. But if you accelerate in hardware, you might get 25 to 30 frames per second. This opens the door to new kinds of devices. The problem is that the simulation and verification of these devices is not simple. You need integration between software and hardware, which is very difficult. If you run everything in the SoC, it is too slow. Each simulation may take five to seven hours. If you co-simulate, you can save time," said Aldec's de Luna.
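One way to reason about frame-rate gains of this kind is Amdahl's law, which is brought in here as an illustration and is not something de Luna cites. The sketch assumes a fraction of each frame's processing time is offloaded to hardware and sped up by some factor. The 5 frames-per-second baseline comes from the quote above, while the 90% offload fraction and the 10x speedup are hypothetical.

```python
def accelerated_fps(baseline_fps, offload_fraction, hw_speedup):
    """Frame rate after offloading part of the per-frame work to hardware.

    Amdahl's law: new_time = old_time * ((1 - p) + p / s), where p is the
    fraction of time offloaded and s is the speedup of that portion.
    """
    if not 0.0 <= offload_fraction <= 1.0:
        raise ValueError("offload_fraction must be between 0 and 1")
    if hw_speedup <= 0:
        raise ValueError("hw_speedup must be positive")
    scale = (1.0 - offload_fraction) + offload_fraction / hw_speedup
    return baseline_fps / scale


if __name__ == "__main__":
    # Hypothetical split: 90% of the frame time is accelerated 10x in fabric.
    print(round(accelerated_fps(5.0, 0.9, 10.0), 1), "frames per second")
```

Under these assumed numbers the result falls inside the 25 to 30 frames-per-second range quoted above, and the software portion left on the processor quickly becomes the limiting factor.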

In short, the same types of methods used for complex ASICs are now being used for complex FPGAs. This becomes more and more important as these devices are used in functional safety applications.

"This is what formal analysis is for: determining whether there are paths along which faults can propagate, and then verifying those paths," said Cadence marketing director Adam Sherer. "These things are a great fit for formal analysis. Traditional FPGA verification methods make these types of verification tasks almost impossible. It is still very popular in FPGA design to assume that it is fast and easy to run hardware tests at system speed and to do a sanity check with a simple level of simulation. Then you program the device, go into the lab and start running. This is a relatively fast path, but observability and controllability in the lab are extremely limited. That is because you can only probe what is brought from inside the FPGA out to the pins, so that you can see it on the tester."

Dave Kelf, chief marketing officer of Breker Verification Systems, agrees. "This has made for an interesting shift in the way these devices are verified. In the past, smaller devices could be verified as far as possible by loading the design into the FPGA itself and running it in real time on a test card. With the emergence of SoC and software-driven designs, this 'self-prototyping' verification method might be expected to lend itself to software-driven techniques, and it may well apply to some stages of the process. However, identifying and debugging problems during prototyping is very complicated. The early verification phases require simulation, so SoC-type FPGAs look more and more like ASICs. Given this two-stage process, commonality between the stages makes the process more efficient, with common debug and testbenches. Further advances will provide this commonality and, in fact, make SoC FPGAs easier to manage."

Conclusion

Looking ahead, Sherer said that users are looking to apply the more rigorous processes currently used in the ASIC field to FPGA flows.

"There is a lot of training and analysis, and they want more technology in the FPGA for debug to support this level," he said. "The FPGA community tends to lag behind on existing technologies and tends to use very traditional methods, so they need training and understanding in areas such as planning and management, and requirements traceability. Those elements from the SoC flow are definitely needed in FPGAs. It is not so much the FPGA itself that is driving this as the industry standards in the end applications. This is a realignment and re-education for engineers who have been working in an FPGA environment."

The boundaries between ASICs and FPGAs are blurring, driven by applications that require flexibility, system architectures that combine programmability with hardwired logic, and tools that are now being applied to both. This trend is unlikely to change soon, as many of the new application areas that require these combinations are still in their infancy.