What are the potential applications of sensor fusion?

Although the concept of sensor fusion was proposed long ago, it has only recently begun to see large-scale application. Sensor fusion has rapidly become a hot trend, starting with the arrival of smartphones and portable devices and now expanding into a wide range of IoT sensors, next-generation autonomous vehicles, and environment-aware UAV applications.

This explosive growth presents opportunities and, of course, many challenges: not only purely technical ones, but also privacy, security, and broader implications for future infrastructure development.

The definition of sensor fusion is relatively simple. It is essentially software that intelligently integrates data from a set of sensors and then uses the integrated result to improve performance. It can combine arrays of the same or similar sensor types to achieve extremely high-precision measurements, or integrate inputs from different sensor types to achieve more complex functions.
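The first case, combining several sensors of the same type to get a more precise measurement, can be sketched with an inverse-variance weighted average. This is a minimal illustration, not a method taken from any particular product: the function name `fuse_readings`, the thermometer values, and the noise variances are all assumptions made for the example, and the readings are assumed to be independent.

```python
from typing import Sequence, Tuple


def fuse_readings(values: Sequence[float], variances: Sequence[float]) -> Tuple[float, float]:
    """Inverse-variance weighted average of independent readings of one quantity.

    Returns the fused estimate and the variance of that estimate.
    """
    if len(values) != len(variances) or len(values) == 0:
        raise ValueError("need equally many values and variances, at least one each")
    if any(v <= 0 for v in variances):
        raise ValueError("variances must be positive")
    # Less noisy sensors (smaller variance) get proportionally more weight.
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    estimate = sum(w * x for w, x in zip(weights, values)) / total
    return estimate, 1.0 / total


# Hypothetical example: three thermometers measuring the same room (deg C).
# The third one is assumed to be noisier, so it influences the result less.
estimate, variance = fuse_readings([20.1, 19.8, 20.4], [0.04, 0.04, 0.16])
```

Under these assumptions the fused variance is smaller than that of the best single sensor, which is the sense in which an array of similar sensors improves precision.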

The largest demand in the consumer electronics sector

The potential applications of sensor fusion are very broad, and industry analysts are very optimistic. According to Memes Consulting, sensor fusion system demand is expected to grow at a compound annual growth rate (CAGR) of approximately 19.4% over the next five years, and market size is expected to increase from $2.62 billion in 2017 to $7.58 billion in 2023. In 2016, North America was the largest production base in the sensor fusion market with a market share of approximately 32.84%, while Europe's market share exceeded 31.51%.

Although the traditional use cases for sensor fusion were mostly industrial, the customer base has shifted significantly in recent years. In 2016, 54.86% of market demand for sensor fusion systems came from the consumer electronics industry.
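As a quick arithmetic check on these figures, the compound annual growth rate can be recomputed from the two market sizes. The sketch below assumes annual compounding; the helper name `cagr` is introduced here for illustration only. Compounding over the six annual periods from 2017 to 2023 reproduces roughly 19.4%, whereas five periods would imply a noticeably higher rate, so the quoted rate appears to refer to the 2017 to 2023 window.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Compound annual growth rate between two values, as a fraction."""
    return (end_value / start_value) ** (1.0 / periods) - 1.0


# Market size in billions of US dollars, as quoted in the text.
rate_six_years = cagr(2.62, 7.58, 6)   # 2017 -> 2023, six annual periods
rate_five_years = cagr(2.62, 7.58, 5)  # same growth squeezed into five periods

print(f"6 periods: {rate_six_years:.1%}, 5 periods: {rate_five_years:.1%}")
```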

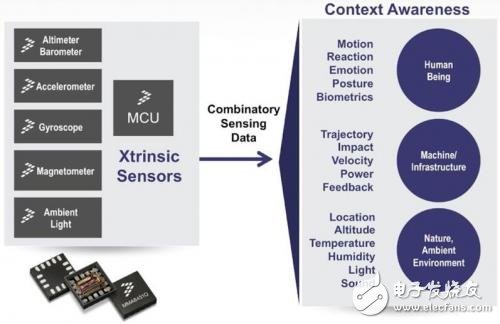

Sensor Fusion for Context Awareness

The growing utility of sensor hubs, a combination of hardware and software that includes MCUs, is driving the huge demand in the consumer electronics industry. In contrast to pure software "sensor fusion," a sensor hub implements specific sensor fusion algorithms for a specific set of sensors, and hubs as a whole cover a wide range of sensor types and algorithms. Hardware-based sensor hubs relieve the system's main CPU of a heavy burden, which is useful for modern devices from smartphones to wearables. Reducing CPU load extends battery life and reduces heat, both of which are key challenges for wearable and smartphone designers.

For example, Google has launched an Android sensor hub designed to connect directly to smartphone sensors such as biometric sensors, accelerometers, and gyroscopes. Its microprocessor, running Google's custom algorithms, can independently interpret gestures and activities without consuming the resources of the main CPU.

So far, this sensor hub has been integrated into countless Android phones and Apple iPhones. As part of the Qualcomm Snapdragon chipset, it has also made its way into a large number of wearable and smart home devices. In these use cases, battery life is always critical.
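To give a feel for the kind of lightweight algorithm a sensor hub might run on its own microcontroller, here is a sketch of a complementary filter that fuses accelerometer and gyroscope data into a pitch angle. This is a textbook technique chosen for illustration, not Google's or Qualcomm's algorithm; the function names, the blend factor `alpha`, the axis convention, and the simulated gyroscope bias are all assumptions.

```python
import math
from typing import Tuple


def accel_pitch(ax: float, ay: float, az: float) -> float:
    """Pitch in radians implied by the gravity vector the accelerometer sees."""
    return math.atan2(-ax, math.hypot(ay, az))


def complementary_step(pitch: float, gyro_rate: float,
                       accel: Tuple[float, float, float],
                       dt: float, alpha: float = 0.98) -> float:
    """One filter update: trust the gyro short-term, the accelerometer long-term."""
    gyro_estimate = pitch + gyro_rate * dt  # integrate angular rate (drifts over time)
    return alpha * gyro_estimate + (1.0 - alpha) * accel_pitch(*accel)


# Hypothetical scenario: the device lies flat and still, the gyro has a small
# constant bias of 0.01 rad/s, and the initial pitch guess is wrong (0.2 rad).
pitch = 0.2
for _ in range(1000):  # 10 s of samples at 100 Hz
    pitch = complementary_step(pitch, 0.01, (0.0, 0.0, 9.81), dt=0.01)
```

In this simulated case, integrating the biased gyroscope alone would drift further from the truth every second, while the fused estimate settles close to zero because the accelerometer keeps pulling it back toward the gravity reference.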

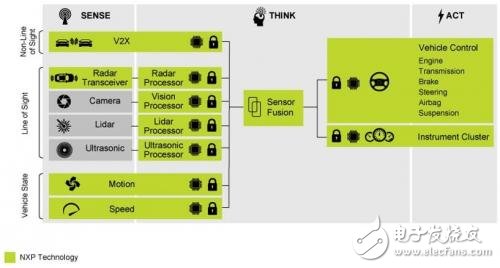

Autonomous driving applications

NXP Automotive Sensor Fusion System

Another major market for sensor fusion is the automotive industry. Automotive anti-collision systems, for example, can use a variety of sensors, including pressure sensors, accelerometers, gyroscopes, and ultrasonic sensors. If the combined sensor readings reach a threshold, the corresponding response can be executed automatically (e.g., deploying the relevant airbag). Most current Level 3 autonomous vehicles rely on sensor fusion to integrate lidar with sensors such as visible-light cameras, infrared cameras, ultrasonic sensors, and radar arrays. These sensors can generate up to tens of millions of points per second, which must be processed in real time for obvious safety reasons.

The goal of the autonomous driving industry is to move gradually toward Level 4 and Level 5, which need less and less involvement from a human driver (none at all at Level 5), so the reliability requirements for sensors, sensor fusion hardware and software, and processors keep rising, well beyond what smartphones and wearables demand. A smartwatch that restarts itself after a malfunction is obviously a very different matter from a failure in the anti-collision system of a Level 5 self-driving car on the highway. Reliability of the entire system is a complex challenge: because sensor fusion monitors environmental variables faster and more efficiently, an abnormal input from a single sensor can trigger a safety system. Automotive designers therefore have to ensure valid inputs and redundancy in every part of the system.

Perhaps the most important advantage of sensor fusion for future autonomous driving is redundant environment perception, in which a variety of different sensing technologies are used to interpret the surroundings. For example, lidar and radar systems in autonomous vehicle applications, as well as pressure sensors in drone systems, are important tools for flight control and positioning when GPS signals are unreliable.
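The idea of acting only when a combination of sensors crosses a threshold can be sketched as a simple vote. Everything specific here is hypothetical: the `CrashSample` fields, the threshold values in `LIMITS`, and the two-vote rule are placeholders for illustration, whereas real systems use calibrated values from crash testing and far more elaborate logic.

```python
from dataclasses import dataclass


@dataclass
class CrashSample:
    decel_g: float            # deceleration magnitude from the accelerometer, in g
    door_pressure_kpa: float  # pressure rise in a door cavity, in kPa
    yaw_rate_dps: float       # yaw rate magnitude from the gyroscope, in deg/s


# Illustrative thresholds only; not taken from any real vehicle.
LIMITS = {"decel_g": 20.0, "door_pressure_kpa": 5.0, "yaw_rate_dps": 150.0}


def should_deploy(sample: CrashSample, min_votes: int = 2) -> bool:
    """Deploy only if at least `min_votes` independent sensors exceed their limit."""
    votes = sum(getattr(sample, name) >= limit for name, limit in LIMITS.items())
    return votes >= min_votes


# Two sensors agree on a severe event, so the response is triggered.
confirmed = should_deploy(CrashSample(decel_g=35.0, door_pressure_kpa=8.0, yaw_rate_dps=10.0))
# Only the accelerometer spikes (for example a pothole or a faulty reading).
unconfirmed = should_deploy(CrashSample(decel_g=35.0, door_pressure_kpa=0.2, yaw_rate_dps=10.0))
```

Requiring agreement between different sensor types is one way to keep a single spurious reading from triggering an irreversible action.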
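One common way to keep a single abnormal input from dominating the result is to compare redundant sensors against their median and discard outliers before averaging. The sketch below is an assumption-laden illustration of that idea: the function name `fuse_with_rejection`, the sensor names, the distances, and the tolerance are all made up for the example.

```python
from statistics import median
from typing import Dict, List, Tuple


def fuse_with_rejection(readings: Dict[str, float], tolerance: float) -> Tuple[float, List[str]]:
    """Average the readings that agree with the median; report the ones that do not."""
    if len(readings) < 3:
        raise ValueError("need at least three redundant readings to outvote one fault")
    center = median(readings.values())
    rejected = sorted(name for name, value in readings.items()
                      if abs(value - center) > tolerance)
    accepted = [value for value in readings.values()
                if abs(value - center) <= tolerance]
    if not accepted:  # possible with an even count and widely split readings
        return center, rejected
    return sum(accepted) / len(accepted), rejected


# Hypothetical distance to the vehicle ahead, in metres, from three sensor types.
# The camera value is assumed to be a glitch.
distance, faulty = fuse_with_rejection(
    {"lidar": 42.1, "radar": 41.8, "camera": 3.0}, tolerance=1.0
)
```

Here the camera reading is flagged and excluded, and the fused distance comes from the two sensors that agree. Reporting the rejected names also gives the system something to log or act on when a sensor keeps misbehaving.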
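The drone case can be illustrated with a pressure sensor acting as an altitude source when GPS degrades. The sketch uses the widely used international barometric formula for the standard atmosphere; the function names, the default uncertainties, and the cutoff `max_gps_sigma_m` are assumptions for this example, and a real flight controller would also need a current sea-level reference pressure and a proper filter.

```python
from typing import Optional


def pressure_to_altitude(pressure_pa: float, sea_level_pa: float = 101325.0) -> float:
    """Approximate altitude in metres from static pressure (standard atmosphere)."""
    return 44330.0 * (1.0 - (pressure_pa / sea_level_pa) ** (1.0 / 5.255))


def fused_altitude(gps_alt_m: Optional[float], gps_sigma_m: Optional[float],
                   baro_alt_m: float, baro_sigma_m: float = 2.0,
                   max_gps_sigma_m: float = 10.0) -> float:
    """Blend GPS and barometric altitude; fall back to barometer if GPS is poor."""
    if gps_alt_m is None or gps_sigma_m is None or gps_sigma_m > max_gps_sigma_m:
        return baro_alt_m  # GPS missing or too uncertain: rely on the pressure sensor
    # Otherwise weight each source by the inverse of its variance.
    w_gps = 1.0 / gps_sigma_m ** 2
    w_baro = 1.0 / baro_sigma_m ** 2
    return (w_gps * gps_alt_m + w_baro * baro_alt_m) / (w_gps + w_baro)


baro_alt = pressure_to_altitude(89874.6)        # roughly 1000 m in the standard atmosphere
no_gps = fused_altitude(None, None, baro_alt)   # GPS dropout: barometer only
blended = fused_altitude(100.0, 2.0, 104.0)     # both sources equally trusted
```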

Smart city applications

This kind of environment awareness derived from sensor fusion is particularly important in the development of IoT and smart homes and smart cities. Once these networked "silent" sensors are successfully integrated, they can be built into a city-scale response system. If household data is used in an identifiable manner, there are of course privacy and security issues, as well as broader public safety issues in city-wide systems. However, early test networks for monitoring urban air pollution (e.g., benzene and particulate matter), built on in-vehicle systems, sensors integrated into buildings, and dedicated monitoring stations, can automatically raise alerts and redirect traffic flows, and have shown great promise.

For example, a program to monitor London's pollution levels, launched in July 2018, combines 100 fixed sensors in the most polluted areas with two specially modified Google Street View cars that track pollution levels on the streets of London. The two cars take an air quality reading every 30 meters, and the data accumulated over a year is used to mark London's pollution "hot spots."

Artificial intelligence powers sensor fusion

Of course, beyond the challenge of extracting useful data from the various sensors in each implementation, there are many other challenges, particularly responding reliably to environmental changes, any of which may increase device errors, noise, and defects during data collection.

A few years ago, handling these errors smoothly was almost impossible, but with the rise of relatively affordable machine learning and artificial intelligence (AI) tools, the real potential of sensor fusion has grown considerably. The application prospects for AI are even more encouraging: it creates new use cases, and with them new markets, for sensor suppliers and designers. In the short term, AI combined with sensor fusion can reduce security risks through enhanced local data processing, significantly reducing the need to securely transfer, process, and store personal data off-site. This can be of real value, reducing business risk and indirect costs while providing clearer benefits to end users.

Clearly, we will see more and more connected sensors embedded in our vehicles, homes, and cities. Adding context to these rapidly expanding data streams will make the need for true sensor fusion even more urgent. Once fused, this data will empower existing applications and unlock new services, from consumer-oriented health and recreation to more efficient supply chain management and faster, more convenient, less polluting transportation networks.